Process So Far

So far, I have been experimenting with simple fractal animations in Processing and with exporting and importing sketches. I have been able to create sketches in Processing's Android mode, export them, and then import them into Android Studio. I have also slightly updated my visual design to be consistent with Android UI elements.

One roadblock has been changing the size of my Processing canvas, but I am slowly learning how to revise my sketch within Android Studio itself. As I do more work there, I anticipate being able to figure out canvas sizing.

I also realize my app still does not look very Android-y, so I am hoping to get feedback during class discussion on how to align my visual design more closely with Android design principles.

Prototype: Flow & Interactivity

As a proof of concept, I made sure I understood how to run Processing sketches in Android Studio. So far I have an animated Processing sketch running in my Android emulator. Now I just have to make sure I can adjust the Processing canvas size and incorporate standard Android elements (e.g., text fields, radio buttons) that communicate with variables in my sketch. Here is a link to my GitHub, where I have a simple example working. It is a .zip file containing a Processing sketch that I exported and then imported into Android Studio. In Android Studio I deleted the “size” line, and then it ran in my emulator with no problem!

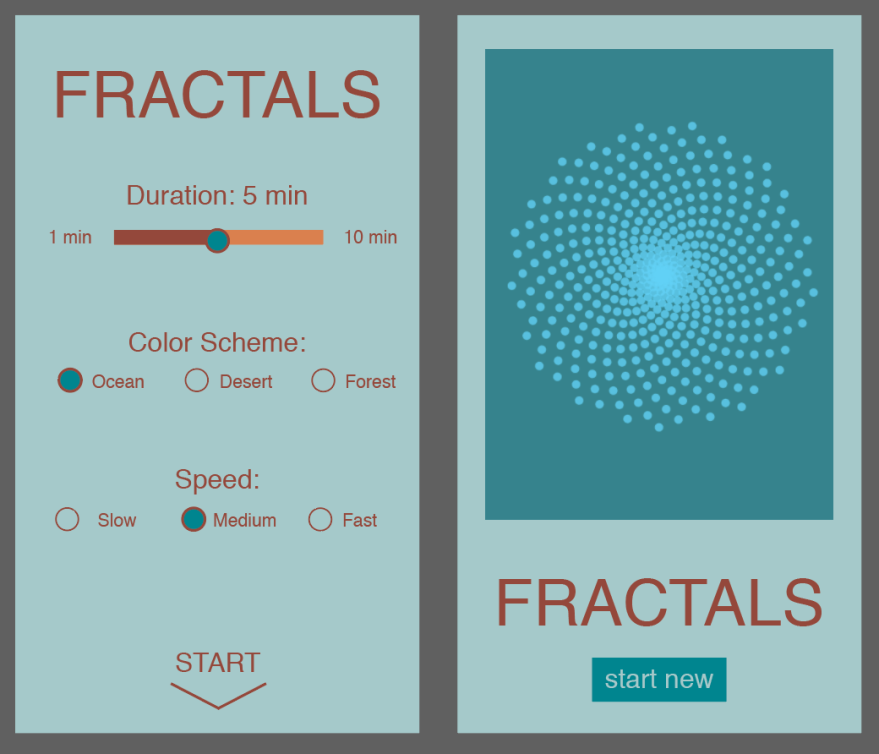

Below are some screenshots of an Illustrator mockup. I changed my original slider to a SeekBar, which is Android’s version of the iOS slider. I also changed the generated fractal to a screenshot from an animated sketch I have running in p5.js. Otherwise, the radio buttons and buttons should be the same. I’m also considering moving away from the ScrollView and putting my Processing sketch in a separate activity from the UI elements. As I get more comfortable importing Processing sketches into Android Studio, I will explore my options there.

In addition to my proof of concept and mockup, here is some basic pseudo-code for my app’s algorithm:

// user slides the seekbar to select a duration between 1 and 10 minutes
// user selects a color scheme from the three
// user selects a speed of animation for the video
// user presses start (if either of the radio buttons are unchecked, a toast pops up to remind them)
// screen scrolls down to show processing canvas
// properties of animation (color, duration, speed) are based on user input
// to end animation: can either scroll back up as they please or press "start new" button
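The input-checking part of this pseudo-code can be sketched in plain Java, kept free of Android classes so it can run on its own. Everything named here (`ColorScheme`, `Speed`, `AnimationSettings`, `StartValidator`) is a hypothetical placeholder rather than code from the app, and it assumes the SeekBar's max is set to 9 so that its progress of 0 to 9 maps onto 1 to 10 minutes. An empty result stands for the case where the toast would be shown.

```java
import java.util.Optional;

// All names below are hypothetical placeholders, not taken from the actual app.
enum ColorScheme { SCHEME_A, SCHEME_B, SCHEME_C } // the three color schemes
enum Speed { SLOW, MEDIUM, FAST }                 // assumed speed options

// Immutable bundle of the values the animation is based on.
final class AnimationSettings {
    final int durationMinutes;
    final ColorScheme colorScheme;
    final Speed speed;

    AnimationSettings(int durationMinutes, ColorScheme colorScheme, Speed speed) {
        this.durationMinutes = durationMinutes;
        this.colorScheme = colorScheme;
        this.speed = speed;
    }
}

final class StartValidator {
    static final int MIN_MINUTES = 1;
    static final int MAX_MINUTES = 10;

    private StartValidator() {}

    // SeekBar progress starts at 0, so shift it up by one minute.
    // Out-of-range values are clamped into 1..10.
    static int minutesFromProgress(int progress) {
        int clamped = Math.max(0, Math.min(progress, MAX_MINUTES - MIN_MINUTES));
        return MIN_MINUTES + clamped;
    }

    // A null scheme or speed means that radio group has nothing checked.
    // Returns empty in that case, which is when the toast reminder would appear.
    static Optional<AnimationSettings> validate(int seekBarProgress,
                                                ColorScheme scheme,
                                                Speed speed) {
        if (scheme == null || speed == null) {
            return Optional.empty();
        }
        int minutes = minutesFromProgress(seekBarProgress);
        return Optional.of(new AnimationSettings(minutes, scheme, speed));
    }
}

class StartFlowDemo {
    public static void main(String[] args) {
        // Color scheme left unchecked: the app would show the toast here.
        Optional<AnimationSettings> missing = StartValidator.validate(4, null, Speed.FAST);
        System.out.println(missing.isPresent() ? "start animation" : "show toast reminder");

        // Everything selected: the app would scroll down and start the sketch.
        Optional<AnimationSettings> ready =
                StartValidator.validate(4, ColorScheme.SCHEME_B, Speed.SLOW);
        ready.ifPresent(s -> System.out.println(
                s.durationMinutes + " min, " + s.colorScheme + ", " + s.speed));
    }
}
```

In the real app, the start button's click handler would read the SeekBar progress and the checked radio buttons, call something like `validate`, and either show the toast or pass the settings on to the sketch.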

Content for App

Below is my app icon. I will probably import the spiral as the image and set the blue color as the icon background in Android Studio. All additional content for my Processing sketch (which I am developing) will come from user input. From here, I just need to figure out how to connect those two elements: variables in the sketch and values saved in UI elements.
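One possible way to connect the two is a small shared object that the UI writes to and the sketch reads from on every frame. The sketch below is only an illustration under assumptions: `SketchParams` and `FractalSketchStandIn` are hypothetical names, the stand-in class takes the place of the real sketch (which would extend Processing's `PApplet` and do this work in `draw()`), and the fields are marked `volatile` on the assumption that the UI and the sketch's animation loop may run on different threads.

```java
// Hypothetical holder for values that UI elements set and the sketch reads.
final class SketchParams {
    // volatile so a value written by the UI is visible to the drawing loop.
    private volatile float speed = 1.0f;
    private volatile int colorSchemeIndex = 0;

    void setSpeed(float speed) { this.speed = speed; }
    float getSpeed() { return speed; }

    void setColorSchemeIndex(int index) { this.colorSchemeIndex = index; }
    int getColorSchemeIndex() { return colorSchemeIndex; }
}

// Stand-in for the real sketch; drawFrame() plays the role of draw().
final class FractalSketchStandIn {
    private static final float BASE_STEP = 0.5f; // rotation per frame at speed 1
    private final SketchParams params;
    private float angle = 0f;

    FractalSketchStandIn(SketchParams params) {
        this.params = params;
    }

    // Reads the latest UI value each frame instead of caching it at startup.
    void drawFrame() {
        angle += BASE_STEP * params.getSpeed();
    }

    float getAngle() { return angle; }
}

class ParamsDemo {
    public static void main(String[] args) {
        SketchParams params = new SketchParams();
        FractalSketchStandIn sketch = new FractalSketchStandIn(params);

        sketch.drawFrame();      // one frame at the default speed
        params.setSpeed(2.0f);   // e.g. the user picks a faster radio button
        sketch.drawFrame();      // the next frame picks up the new speed

        System.out.println("angle after two frames: " + sketch.getAngle());
    }
}
```

The design choice here is that the UI never calls into the sketch directly; it only updates `SketchParams`, so the same approach should work whether the sketch lives below the controls in the same screen or in a separate activity that is handed the values at launch.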